Full Transcript of Nvidia Analyst Call | What Trump Card Did Jensen Huang Present to Wall Street at the GTC Conference?


During NVIDIA's GTC Financial Analyst Q&A, CEO Jensen Huang shared profound insights into AI computing, the transformation of industry business models, and NVIDIA's strategic moat. The core points of the session can be summarized in the following six key aspects:

1. Targeting the Multi-Trillion-Dollar Market Demand: NVIDIA is extremely confident in the market's continued explosive growth. Huang made it clear that the Blackwell and Rubin architectures alone give NVIDIA strong visibility into market demand exceeding $1 trillion, and that this number continues to grow as new clients, markets, and geographies develop.

2. The Traditional IT Industry Will Be Reshaped, and the Token Economy Becomes the Core Future Business Model: Huang offered a disruptive industry forecast. The existing $2 trillion IT software licensing industry will not be destroyed but reshaped and dramatically expanded (potentially to $8 trillion). In the future, IT companies will no longer sell software licenses; they will generate and rent out Tokens. 100% of IT companies worldwide will integrate OpenAI, Anthropic, or open-source models and become Token resellers, a fundamental transformation of the underlying business model.

3. AI Agents Will Enable a New Norm of 24/7 Compute Consumption: In the past, computers spent much of their time idle. In the future, they will run around the clock, because AI agents will perform tasks automatically in the background and constantly generate Tokens. Agent systems are extremely complex: they need to learn autonomously, call tools (such as web browsers), and spawn sub-agents. All of this places extremely high demands on structured and unstructured data processing and pushes demand for comprehensive accelerated computing (including CPUs and various types of GPUs) to new heights.

4. Physical AI Will Trigger a Real-Economy Revolution Far Beyond the Digital World: Today AI is concentrated in the cloud and the digital domain, but Huang pointed out that once physical AI reaches an inflection point, AI will enter factories, the edge, and specific physical locations. Because the real-world, atom-based physical industry (about $70 trillion) is far larger than the purely digital sector, physical AI will drive rapid growth on the non-hyperscale side of the business (i.e., enterprise on-prem and industrial deployment), which could become the dominant portion in the future.

5. NVIDIA's Ultimate Moat Is Selling the Entire "Full-Stack AI Factory," Not Just Chips: Huang emphasized that attempting to compete with NVIDIA using a single low-cost chip is futile because **“customers don't buy chips; they buy platforms.”** NVIDIA is currently the only company in the world capable of optimizing a unified architecture across multiple types of memory (HBM, LPDDR5, SRAM). By fully controlling chips, networking, storage, and the entire software stack, NVIDIA can build a harmonious and unified "AI factory" while upgrading its system architecture "once a year," a pace unattainable by rivals who merely piece technologies together.

6. Inference Is the Endgame for AI Monetization, but Post-Training Compute Will Grow Exponentially: Huang made two important clarifications about the relationship between training and inference.

- The Value and Difficulty of Inference: There was a misconception that inference is easy, but the reality is inference is “super hard” and growing harder, as it's when AI truly thinks and works. Huang expects that in the future, 99% of compute power will be used for inference, as this is where Tokens are truly converted into economic output and real value in healthcare, manufacturing, finance, and other industries.

- The Evolution of Training: AI model training has not ceased; it's progressed from "pre-training (rote memorization)" to "post-training (skill learning, tool usage, reinforcement learning, etc.)." With the addition of multimodal and physical-world interaction data, future post-training compute requirements may be millions to billions of times greater than pre-training.

So I hope you understand what that $1 trillion is. By definition, it will continue to grow. By definition, compared to the benchmark I’m referencing, it will keep growing and get bigger than that number.

I also want to say a few things. Again, last year was a very good year because fiscal year 2025 was our year of inference. I think we helped everyone understand the relationship between the price of computers and the cost of a Token. Only at the margin are a computer’s price and a Token’s cost related. The price of a computer and the cost of a Token. Remember, people buy these computers to produce Tokens. The efficiency in producing those Tokens is crucial. They’re not reselling the computer. If you buy a computer that’s expensive and you just flip it, then yeah, it’s expensive. But if you buy an expensive computer, it’s because the technology is incredible and it produces Tokens at such an amazing rate. So while you’re buying the most expensive computer, you’re producing the lowest-cost Tokens. Does that make sense?

This is what we do every day. That’s our job. That’s how we deliver the value we deliver. That value difference. The two numbers I just described are exactly how we ensure gross margins. We have to deliver outcomes. And we relentlessly, consistently deliver so much value, measured by the number of Tokens generated per second. That’s the foundation of our delivered value. Each generation, we deliver so much more value that customers are willing to pay more for the next generation rather than less for the current one. They’re willing to upgrade immediately. When Vera Rubin comes, it makes more sense to install Vera Rubin than to keep buying Grace Blackwell. Are you following? Someone nods. Okay. Because the value is better, even if the price is higher.

So I compare those two systems because they are the de facto two standard systems in the world. Until you can beat those two systems, it makes no sense to buy anything else. And those two systems are incredibly hard to beat.
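
The price-versus-Token-cost argument above can be made concrete with a little arithmetic. The following is a minimal sketch, not NVIDIA's own model: every price, throughput figure, and lifetime below is a hypothetical placeholder chosen only for illustration, and the calculation ignores power, networking, staffing, and financing costs. It simply amortizes a system's purchase price over the Tokens it produces.

```python
from dataclasses import dataclass

SECONDS_PER_YEAR = 365 * 24 * 3600


@dataclass(frozen=True)
class System:
    name: str
    price_usd: float              # hypothetical purchase price
    tokens_per_second: float      # hypothetical sustained throughput
    lifetime_years: float = 4.0   # hypothetical amortization window


def cost_per_million_tokens(system: System, utilization: float = 1.0) -> float:
    """Amortized hardware cost per one million Tokens (hardware price only)."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    total_tokens = (
        system.tokens_per_second
        * utilization
        * system.lifetime_years
        * SECONDS_PER_YEAR
    )
    return system.price_usd / total_tokens * 1_000_000


# Illustrative numbers only: the newer system costs 2x as much
# but is assumed to produce 10x the Tokens per second.
older = System("older generation", price_usd=3_000_000, tokens_per_second=1_000_000)
newer = System("newer generation", price_usd=6_000_000, tokens_per_second=10_000_000)

for s in (older, newer):
    print(f"{s.name}: ${cost_per_million_tokens(s):.6f} per million Tokens")
```

Under these assumed inputs the pricier system yields the cheaper Token, because throughput grows faster than price. If the throughput gain were smaller than the price increase, the conclusion would reverse, which is why the ratio of the two numbers matters rather than the sticker price alone.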

Because Moore’s Law can’t give you a 35x improvement. So Moore’s Law alone is not enough. Making a faster chip is not enough. You’d have to make a whole lot of faster chips.

So, last year, 2025, was our inference year. I think we showcased our leadership in inference and training. Now inference. And the other great thing we did last year: we increased our coverage. We increased the number of AIs now supported on our platform. Last year, that is, 2025. We added Anthropic, which is new. We added Meta SSL (note: possibly a mis-transcription, likely referring to a Llama-related model), which is new. We are continuing to work with Meta on everything else. Meta SSL is brand new, and they have brand-new compute needs.

We can all acknowledge that last year open-source software, open-source models, really took off. To the point that, at API inference service providers as of now, open-source models probably represent… the second most popular AI models. The bigger ones. Number one, of course, is OpenAI. In terms of total Tokens generated, open-source models are number two. As you know, Nvidia is the world’s best platform for open-source models. We are the standard for open-source models, everywhere. So number one is OpenAI. Number two is all open-source models. Number three is Anthropic. Number four is xAI. Grab your lists, keep going. I think Nvidia’s model coverage significantly increased last year, which explains our accelerating growth on a huge base. As you know, we’re already a very large company, but we’re now accelerating. Our growth is actually accelerating. So anyway, that’s my thought.

Oh, lastly. We love our hyperscaler partners, we work very, very closely together, but it’s important to understand that our relationship with hyperscalers is not just selling to them. We bring customers to them. Having CUDA in their cloud brings all CUDA developers, all AI-native enterprises, all the big companies working with us. Whenever we help those big or small companies accelerate, we bring them, we run their workflows there, and get them hosted at CSPs worldwide. We are one of the world’s best CSP sales teams. That’s why if you go to the show floor, they have the biggest booths. AWS has the biggest booth here. Google Cloud has the biggest booth here. Azure has the biggest booth. Oracle has a huge booth. CoreWeave has a huge booth, too. Does that make sense? Because we bring them customers. Why are they here? To sell to my developers. All our developers only know how to program one thing. They only know how to program CUDA, and they only use CUDA libraries. When we win customers, when we help these developers integrate Nvidia, they end up landing at one of our CSP partners. We are one of the best sales forces for CSPs, period.

However, we also see tremendous diversity of customers beyond CSPs. Regional cloud, industrial on-prem. Dell, Lenovo, and HP are growing so quickly, and all the ODMs are growing so fast. Much of that business goes to the right side of that chart, the 40%. Most people see us for the left 60% of the business. Without Nvidia’s full-stack technology, without us being able to build you an entire AI factory, without every open platform in the world running on Nvidia, you have no shot at that 40% of the market. So the core of that chart is, most of the left 60% is Nvidia developers landing in the cloud. And 100% of the right 40% is impossible unless you have end-to-end, full-stack technology. Did I make that clear?

It’s important to understand our business. We aggregate all of this and call it accelerated computing. Maybe that’s not helping you understand, so next year we’ll split it a bit differently. Okay, in the future, we’ll break it down differently. It might look like that chart, you’ll see. Something like hyperscalers making up 60%. Even if you see that, remember that many of those customers are ones we bring to the cloud. Then on the right, the 40% is completely impossible if you’re just making a chip. Because they don’t buy chips, they buy platforms.

All three are on one slide. Maybe that blows your minds. That’s why I repeated it. Did that help? You know what I should do? I should do three panels or three slides. That would be a seven-hour keynote. But it would be worth it. Alright. That’s all. Thank you. Now we’ll open it up for questions. Hi.

Disclaimer: The content of this article solely reflects the author's opinion and does not represent the platform in any capacity. This article is not intended to serve as a reference for making investment decisions.

You may also like

Fold Misses Q4 Revenue, Bitcoin Treasury Remains an Overlooked Upside Catalyst

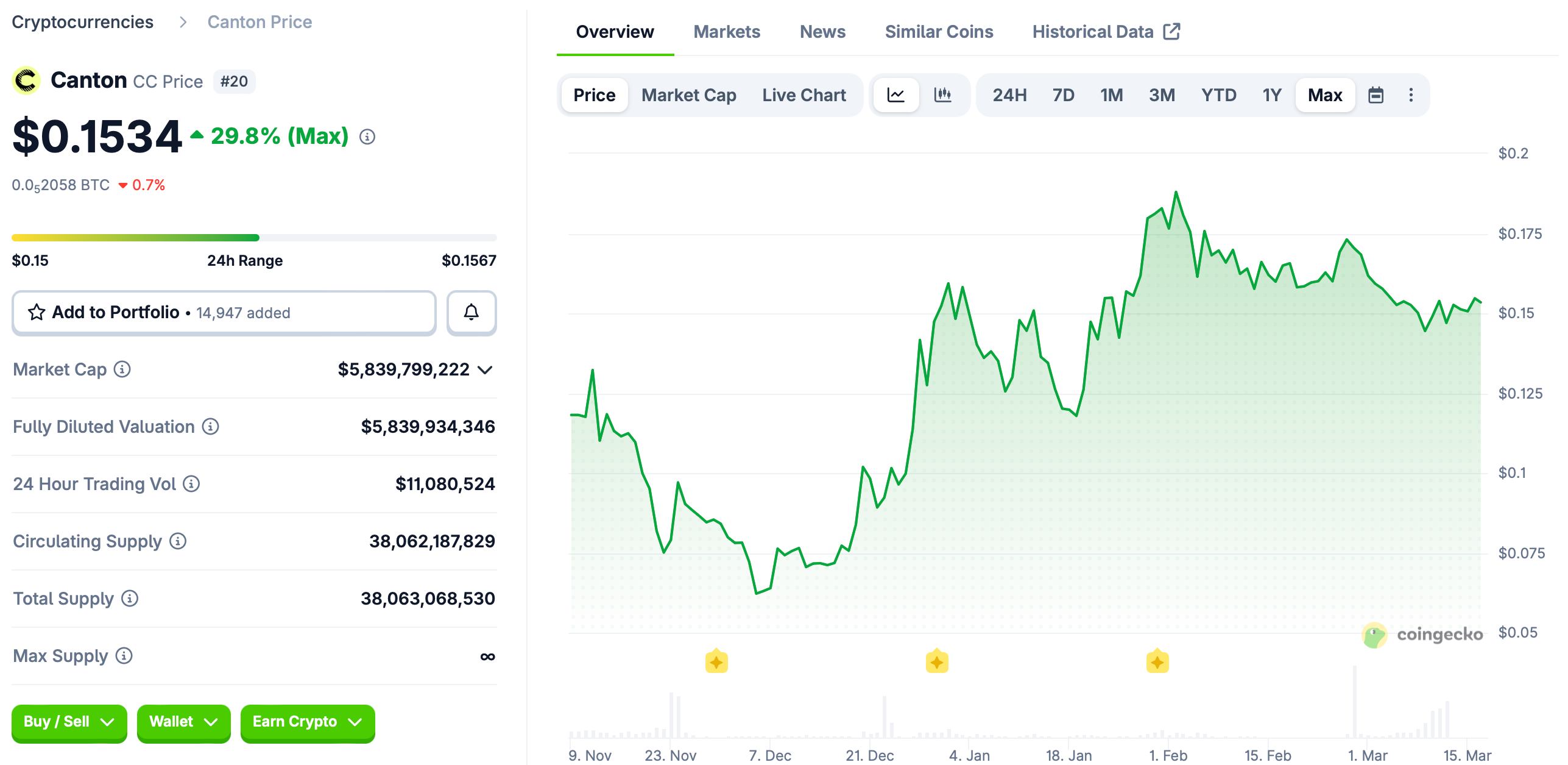

Moody’s brings credit ratings onchain with Canton Network integration

DocuSign's Strong Competitive Edge and Expanding IAM Strategy Present a Value Opportunity at Yearly Low Prices

Kestra's Earnings Beat Masks Institutional Divide as FMR Buys, Citadel Sells