Google Cloud Next Conference Highlights: AI Agents Enter Large-Scale Adoption, Inference Chips Become an Independent Growth Curve

Google’s annual cloud computing conference, Cloud Next 2026, delivered a clear signal: the enterprise AI battlefield has shifted from “how to experiment” to “how to govern and scale deployment,” and Google’s answer to this challenge is a full vertical stack from chips to platforms. This conference was more than a product launch—it signified that agentic AI is crossing the critical threshold from proof-of-concept to enterprise-grade production deployment.

According to Trading Desk, JPMorgan analyst Doug Anmuth wrote after the conference: “This shift from experimenting to deploying may be the strongest evidence yet that agentic AI is crossing the proof-of-concept chasm and moving toward enterprise workloads.” Data from the demand side backs up this judgment: Google’s first-party models now process 16 billion tokens per minute via direct API connection, up sharply from 10 billion last quarter; about 75% of Cloud customers are using its AI products; and Gemini Enterprise’s paid monthly active users grew 40% quarter-over-quarter in Q1.

Three institutions—JPMorgan, BofA Securities, and Citi Research—all maintained their Buy ratings on Alphabet after the conference, with target prices of $395, $370, and $405, respectively. Their shared logic: Cloud revenue is consistently growing faster than the advertising business, and the combination of the “Gemini model + self-developed TPU + enterprise orchestration platform” is building a differentiated moat, which is likely to become a more direct share price driver. Meanwhile, Sundar Pichai announced a capex range of $175–185 billion for 2026 in his keynote, and the market remains highly attentive to the capex trajectory around earnings windows.

Enterprise client concerns have changed: From “how to try” to “how to manage”

If Cloud Next over the past two years was a showcase for technical prowess, this year’s focus shifted to turning AI from experimental deployments at a small group of early-adopter enterprises into production workloads that operate at scale, are well governed, and have controllable costs.

JPMorgan’s report outlines this evolution: in 2024, the emphasis was on Gemini’s integration with Workspace and early agent exploration; 2025 highlighted the A2A protocol and the seventh-generation TPU, Ironwood; and in 2026, key themes such as Agentic Cloud, data usability, AI infrastructure cost efficiency, and security all converge on one result: the transition of agents from pilots to sustainable production deployment.

Citi Research analyst Ronald Josey put it more directly: As managers begin “to manage multiple Agents across workflows,” enterprises are moving from “just using models” to “having Agents reshape processes,” and Google Cloud’s bet is precisely on this migration, positioning itself as the “key operating system of the agentic enterprise.”

This context also explains why the announcements concentrated on two areas: computing power and network architectures for agent workflows, and the upgrade of the platform into an “agent factory.” Google chose not to announce any financial updates at the event. Instead, it pointed to usage data to show that products are running in actual production environments, including that about 75% of Google’s new internal code is now AI-generated and engineer-reviewed, and that the time to mitigate security threats has been reduced by more than 90%.

TPU Generation 8: Inference splits from training and becomes an independent capital narrative

The most structurally significant hardware change at this conference was the splitting of TPU Gen 8 into two distinct product lines: TPU 8t for high-throughput training workloads, and TPU 8i, positioned as a “from-the-ground-up inference-optimized” specialized chip for real-time inference.

This “forked architecture” was most clearly explained in JPMorgan’s report: TPU 8t uses a new Virgo Network fabric to scale clusters to over a million chips per cluster, delivering peak performance about three times that of the previous Ironwood generation, targeting the reduction of training times for cutting-edge trillion-parameter models; TPU 8i features a new boardfly network topology, about three times more on-chip SRAM, and is primarily aimed at overcoming the latency and memory bottlenecks encountered by agentic inference at scale. Citi’s report complements this with efficiency metrics: TPU 8i has about one-fifth the latency of TPU 7 and improves performance per dollar by approximately 80%.
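The efficiency figures Citi cites can be restated as a cost reduction with a short calculation. The sketch below is illustrative only: it assumes "performance per dollar" means work delivered per dollar spent, so an 80% gain implies the cost of a fixed amount of work falls to 1/1.8 of its prior level. The function and variable names are hypothetical, not taken from any report.

```python
def cost_reduction_from_perf_per_dollar(gain: float) -> float:
    """Fractional drop in cost per unit of work when performance per dollar rises by `gain`.

    Assumes performance per dollar = work / cost, so cost per unit of work
    scales as 1 / (1 + gain).
    """
    return 1.0 - 1.0 / (1.0 + gain)


# Figures quoted from Citi's report (both approximate).
perf_per_dollar_gain = 0.80   # "improves performance per dollar by approximately 80%"
latency_ratio = 1 / 5         # "about one-fifth the latency of TPU 7"

cost_drop = cost_reduction_from_perf_per_dollar(perf_per_dollar_gain)
latency_drop = 1.0 - latency_ratio

print(f"Cost per unit of inference work: about {cost_drop:.0%} lower")
print(f"Latency: about {latency_drop:.0%} lower")
```

Under that assumption, an 80% gain in performance per dollar corresponds to roughly a 44% lower cost for the same inference work, which is a smaller number than the headline figure suggests.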

JPMorgan’s reasoning is notable: Since inference no longer “reuses training chips” but instead requires specialized ASICs for optimization, this means Google believes inference compute demands are now large enough to justify dedicated silicon and capital investment. As a result, revenue opportunities also undergo a structural change—they will not only follow training workloads, but increasingly come from the ongoing consumption on the inference side, forming an independent growth curve.

It is worth noting that all three reports mention that Google management did not discuss the possibility of selling TPUs externally at the conference, meaning that this hardware line currently serves primarily the “self-use plus cloud service sales” logic and has not yet evolved into an independent hardware commercialization story.

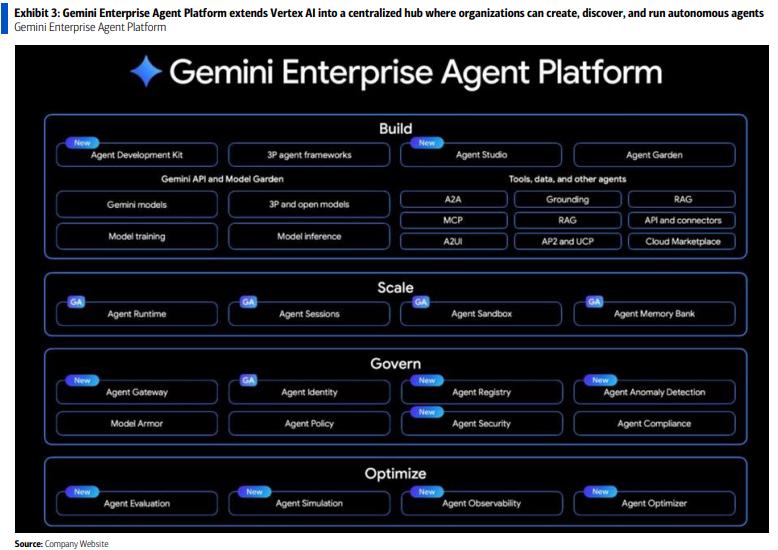

Restructuring the platform layer: Vertex AI “upgraded” as the unified governance portal for enterprise Agents

Beyond hardware, the restructuring of the platform layer was another structural change worth noting at this event. Google launched the Gemini Enterprise Agent Platform, which JPMorgan described as effectively “superseding Vertex AI”—consolidating enterprise building, orchestration, governance, and security into a unified entry point rather than fragmented function modules.

BofA Securities breaks this restructuring into three levels. The infrastructure layer introduces AI Hypercomputer, integrating GPU/TPU, high-speed networking, storage, and optimization software into a single architecture covering the entire lifecycle from training to inference. The platform layer organizes capabilities around four dimensions: build/scale/govern/optimize—including low-code/no-code Agent creation, centralized management, cross-ecosystem orchestration (integrating Google Workspace, Microsoft 365, and third-party apps), and built-in observability and traceability. The application layer, through Workspace Intelligence, delivers agent capabilities deeper into Gmail, Docs, Chat, and other high-frequency work scenarios, enabling multi-step tasks across applications.

Citi’s report takes a different angle, emphasizing that the key value of the platform lies in “allowing enterprises to run multiple Agents within the same management framework.” In product philosophy, this means: the threshold for large-scale agent deployment now depends less on the enterprise’s technical depth, and more on whether the platform’s built-in capabilities are sufficiently standardized to let more enterprises skip custom engineering and directly deploy in production.

Google backs “full-stack AI” with internal data

No financial data was disclosed at the conference; instead, Google relied on internal quantifiable cases to support the narrative that “agents have entered production.” Citi’s report summarizes these cases into four dimensions:

In R&D, about 75% of new code is now AI-generated and engineer-approved. Citi provides a historical comparison: about 30% in Q1 2025 and about 50% in October 2025, indicating rapid penetration. One code migration project was completed six times faster than a year earlier.

In marketing and content production, turnaround from concept to video material sped up by about 70%, with conversion rates rising about 20%.

On the security side, Google Cloud now automatically handles tens of thousands of unstructured threat reports every month, reducing threat mitigation time by more than 90%. Security is differentiated through integration with Wiz and Mandiant. Citi also notes that AI has compressed the “average exploit window” to “minus seven days,” meaning attacks often occur before patches are released, which further underscores the strategic value of automated security orchestration.

For customer service, YouTube deployed AI voice Agents in six weeks for call scenarios on NFL Sunday Ticket and YouTube TV; Citi highlights their low latency, high accuracy, and bilingual capability.

In all three reports, these cases serve the same purpose: distinguishing “real enterprise workloads” from staged demos and supporting the assessment that Cloud’s quarterly results carry upside risk.

$175–185 billion capex range: it’s “no change for now,” not “a peak in sight”

Sundar Pichai’s keynote set a capital expenditure range of $175–185 billion for 2026, the only financial figure mentioned at the conference and a topic with relatively divergent views among the three reports.

JPMorgan’s interpretation is pragmatic: making this range public increases the chances that guidance in next week’s earnings report remains unchanged, but it does not confirm that capex has reached its upper bound. Its own estimates are $181 billion for 2026 and $226 billion for 2027 (about 25% year-on-year growth), about 12% above market consensus. The report also puts a counter-signal on the table: Amin Vahdat and Jeff Dean both emphasized at the conference that AI is still supply-constrained, suggesting that the capex trajectory “could still move higher” and that “the range is not a ceiling.”
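The capex figures above can be cross-checked with simple arithmetic. The following is a minimal sketch using only the numbers quoted in this article; the variable names are illustrative, and the consensus comparison is left out because the article does not state the consensus figure or which year it refers to.

```python
# All values in billions of US dollars, as quoted in the article.
guided_low, guided_high = 175.0, 185.0   # 2026 capex range from the keynote
capex_2026_est = 181.0                   # JPMorgan estimate for 2026
capex_2027_est = 226.0                   # JPMorgan estimate for 2027

# Year-on-year growth implied by JPMorgan's two estimates.
yoy_growth = capex_2027_est / capex_2026_est - 1.0

# Where the 2026 estimate sits relative to the guided range.
midpoint = (guided_low + guided_high) / 2
within_range = guided_low <= capex_2026_est <= guided_high

print(f"Implied 2027 growth: {yoy_growth:.1%}")
print(f"Guided midpoint: ${midpoint:.0f}B, estimate inside range: {within_range}")
```

The implied growth rate comes out at just under 25%, consistent with the "about 25%" in the report, and the 2026 estimate sits slightly above the midpoint of the guided range.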

BofA Securities, on the other hand, directly includes Capex/FCF pressure in its downside risk list: AI investment drives up capex and lowers free cash flow, which is one of the most direct pressure points on profit margins.

All three reports agree: Cloud Next has answered the question “Does Google have agentic AI products and infrastructure?” The question for the coming quarters is whether these investments can deliver Cloud’s growth and profit margin expectations without significantly sacrificing cash flow.

Three investment banks maintain Buy, but each with its own risk focus

In terms of investment conclusions, all three reports maintain a Buy rating, but anchor points and emphasis vary.

JPMorgan keeps Overweight with a 12-month target price of $395, based on about 29x its 2027 GAAP EPS forecast of $13.51; the report names Alphabet as a “top overall pick,” supporting this not only on cloud, but also on continued Search and YouTube ad growth, expansion of non-ad businesses, and Waymo’s optionality value.

BofA Securities maintains Buy with a $370 target based on 27x 2027 core GAAP EPS plus per-share cash; the report keeps increasing Cloud’s SOTP weighting and provides a reference of a $1.2 trillion contribution at a 10x revenue multiple, arguing that cloud margin expansion and AI asset monetization provide room for higher multiples.

Citi Research maintains Buy with the highest target at $405, about 29x 2027 GAAP EPS of $13.92; the premium is attributed to two drivers—accelerating Google Cloud revenue growth from TPU and Gemini demand, and search business resilience backed by strong query growth.
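The valuation anchors above follow the usual "target price = multiple × forward EPS" form, so the quoted multiples can be sanity-checked by dividing each target by its EPS forecast. The sketch below is a rough check under that assumption, using only figures from this article. BofA's target is excluded because it adds per-share cash and no EPS figure is given here; the implied Cloud revenue line simply inverts BofA's stated 10x revenue multiple.

```python
# (target price in USD, 2027 GAAP EPS forecast in USD), as quoted in the article.
targets = {
    "JPMorgan": (395.0, 13.51),
    "Citi": (405.0, 13.92),
}

# Implied price-to-earnings multiple = target price / forecast EPS.
implied_multiple = {bank: price / eps for bank, (price, eps) in targets.items()}

for bank, multiple in implied_multiple.items():
    print(f"{bank}: implied multiple of about {multiple:.1f}x")

# BofA's reference point: $1.2 trillion Cloud value at a 10x revenue multiple.
cloud_value_bn = 1200.0
revenue_multiple = 10.0
implied_cloud_revenue_bn = cloud_value_bn / revenue_multiple
print(f"Implied Cloud revenue base: ${implied_cloud_revenue_bn:.0f}B")
```

Both implied multiples land a little above 29x, matching the "about 29x" in the reports, so the gap between the two targets comes mainly from the different EPS forecasts.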

On the risk side, all three reports flag intensifying AI competition and potential search traffic diversion, with JPMorgan and BofA Securities also highlighting EU DMA compliance pressure. BofA points to “slower-than-expected LLM integration in search or negative impact on search revenue” as the biggest near-term uncertainty, with the next test being the Q1 results due after the close on April 29.

