Server CPU market to grow fivefold in 5 years! UBS: ARM is the biggest beneficiary, followed by AMD, and lastly Intel

In the AI era, while GPUs have taken all the limelight, CPUs may be quietly ushering in their own explosive growth.

According to Chasing Wind Trading Desk, on May 5 the UBS Global Research team released an in-depth report on the U.S. semiconductor industry. Fielding a flood of investor questions about how agentic AI will affect the server CPU market, analysts including Timothy Arcuri interviewed a range of industry experts and combined bottom-up and top-down models to reach a clear conclusion:

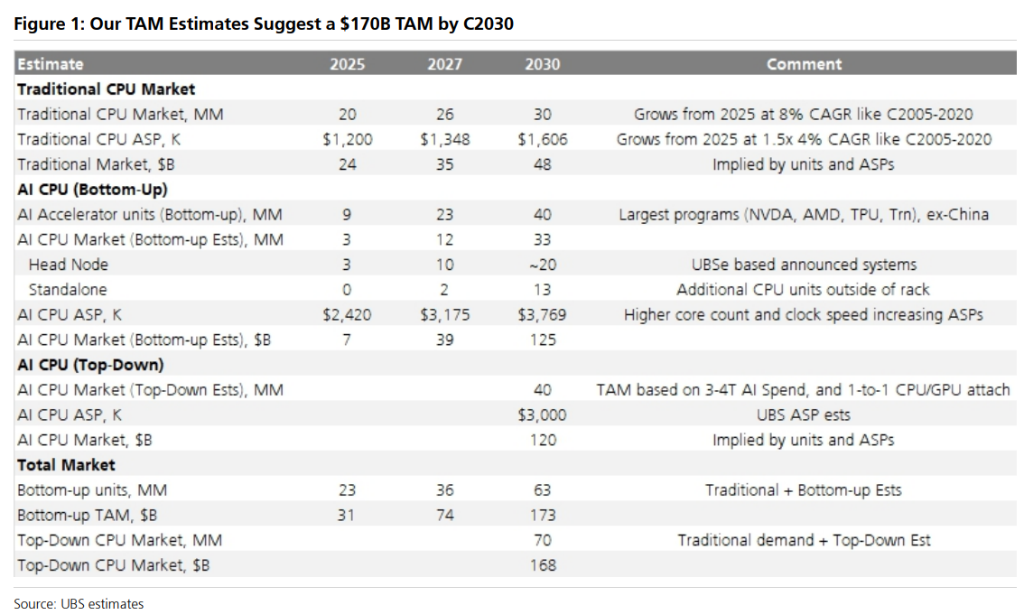

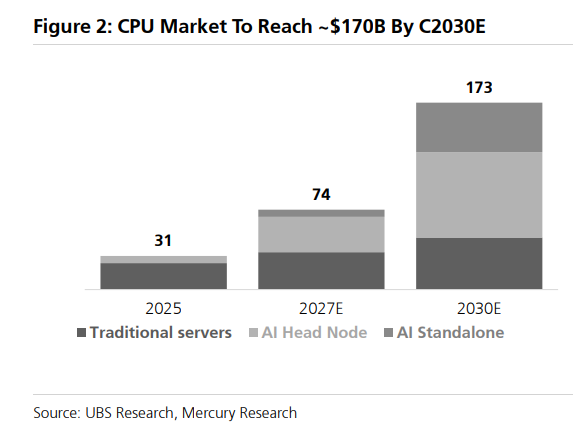

The market has severely underestimated the value of CPUs in the AI era. The total addressable market (TAM) for server CPUs will grow from about $30 billion in 2025 to about $170 billion in 2030, more than a fivefold expansion in five years.
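As a quick check on the headline figures, the sketch below computes the growth multiple and the implied compound annual growth rate (CAGR) from the report's rounded 2025 and 2030 TAM estimates. The function names are illustrative; only the two dollar figures come from the report.

```python
# Growth arithmetic for the server CPU TAM figures quoted above.
# Inputs are the report's rounded estimates, so outputs are approximate.

def growth_multiple(start: float, end: float) -> float:
    """Ratio of the ending value to the starting value."""
    return end / start

def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate implied by start -> end over `years` years."""
    return (end / start) ** (1 / years) - 1

tam_2025 = 30e9   # ~$30B server CPU TAM in 2025 (report estimate)
tam_2030 = 170e9  # ~$170B server CPU TAM in 2030 (report estimate)

multiple = growth_multiple(tam_2025, tam_2030)
rate = cagr(tam_2025, tam_2030, years=5)
print(f"{multiple:.2f}x over five years, CAGR ~{rate:.1%}")
```

On these inputs the TAM grows roughly 5.7x, which corresponds to compound growth of about 41-42% a year.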

Over the past two years of AI frenzy, GPUs have stolen all the spotlight. But as AI evolves from "simply generating conversations" to "agentic execution of tasks," the bottleneck in computing power is quietly shifting.

Agentic AI Reshapes Compute Landscape: From “GPU Dominated” to “CPU Resurgence”

To understand the boom in the CPU market, one must first grasp the workload differences between agentic AI and traditional AI.

In traditional AI training and base inference, GPUs are the absolute workhorse. Picture AI compute as a factory: the GPU is the tireless worker on the assembly line, and the CPU is the manager assigning tasks. In the standard model, one manager (CPU) can easily oversee several workers (GPUs).

But agentic AI changes the game. An agent must not only generate text but also orchestrate tasks, call tools (such as executing code in a sandbox VM), retrieve files, and more. As a result, the “manager’s” workload rises sharply.

Analysts obtained striking data from expert interviews:

- Shift in Workload Focus: Experts noted that "in traditional AI workloads, 70-80% of the compute is spent on inference itself (GPU); but in agentic inference, this ratio is reversed, with 70-80% of the work shifted to the CPU."

- Explosion in Core Ratios: In traditional AI training, each GPU typically needs only 8-12 CPU cores; base inference requires 16-24; agentic AI requires 80-120 per GPU. Comparing midpoints, agentic scenarios need about 5 times as many CPU cores per GPU as base inference and about 10 times as many as training.

- Concurrency Pressure: "An agent (and each sub-agent it spawns) may require 1-4 CPU cores, while a complex task may require creating 10-100 sub-agents."
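The ratios in the list above can be checked with a few lines of arithmetic. This minimal sketch compares the midpoints of the quoted core ranges and multiplies out the concurrency figures; using midpoints, and ignoring the parent agent's own cores, are simplifying assumptions rather than the experts' method.

```python
# Per-GPU CPU core ranges quoted by the experts, as (low, high).
CORES_PER_GPU = {
    "training": (8, 12),
    "base_inference": (16, 24),
    "agentic": (80, 120),
}

def midpoint(rng: tuple[int, int]) -> float:
    """Midpoint of a (low, high) range."""
    low, high = rng
    return (low + high) / 2

def ratio_vs(workload: str, baseline: str) -> float:
    """Midpoint-to-midpoint ratio of CPU cores per GPU between two workloads."""
    return midpoint(CORES_PER_GPU[workload]) / midpoint(CORES_PER_GPU[baseline])

def task_core_range(cores_per_agent=(1, 4), sub_agents=(10, 100)) -> tuple[int, int]:
    """Cores tied up by one complex task: sub-agents x cores per agent.

    Assumption: the parent agent's own cores are ignored.
    """
    return (cores_per_agent[0] * sub_agents[0], cores_per_agent[1] * sub_agents[1])

print(f"agentic vs training:       {ratio_vs('agentic', 'training'):.1f}x")
print(f"agentic vs base inference: {ratio_vs('agentic', 'base_inference'):.1f}x")
print("cores for one complex task:", task_core_range())
```

Comparing midpoints gives about 10x versus training and about 5x versus base inference, consistent with the 5-10x range, and a single complex task could occupy roughly 10 to 400 cores.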

This fundamental shift upends the old "GPU-heavy, CPU-light" compute architecture and opens up substantial incremental demand for CPUs.

$170 Billion: A Massive Market

Based on this logic, the analysts recalculated the server CPU TAM and concluded that it will reach about $170 billion by 2030.

How was this massive figure arrived at? The analysts used both "bottom-up" and "top-down" methods for cross-validation:

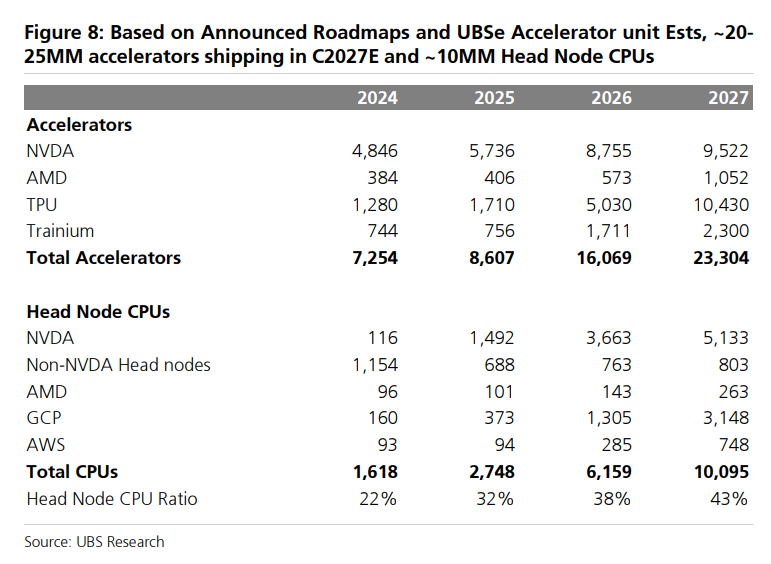

1. Bottom-up Estimate: Starting from models of U.S. hyperscalers' accelerator deployments, the analysts predict that the market will ship about 23 million accelerators (XPUs) and about 10 million head node CPUs in 2027. As agentic AI develops, accelerator shipments will reach about 40 million by 2030. More importantly, the CPU-to-GPU ratio will shift from today's 1:4 to 1:2 or even 1:1. In addition, because AI workloads call for CPUs with higher core counts and frequencies, AI CPU average selling prices (ASPs) will rise significantly; NVIDIA’s 144-core Grace CPU Superchip, for example, may be priced between $3,000 and $4,000. With both volume and price rising, the AI CPU market alone will reach $125 billion.

2. Top-down Estimate: The analysts start from NVIDIA’s forecast of a $3-4 trillion AI TAM in 2030 and estimate that about 40 million XPUs will ship that year. Assuming the average AI CPU ASP rises to $3,000, and with a CPU ratio of 1:1 or 2:1, they derive an AI CPU market of $120 billion to $200 billion.
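The two estimates rest on simple unit-times-price arithmetic, sketched below with the report's rounded inputs. The sketch treats $3,000 as the average price of an AI CPU, which is the reading under which 40 million units reproduce the $120 billion low end, and it assumes the $125 billion bottom-up figure refers to 2030; both are assumptions, and the helper names are hypothetical.

```python
# Cross-check of the bottom-up and top-down AI CPU estimates.
# All inputs are the report's rounded figures; the ASP reading is an assumption.

def cpu_units(xpu_units: float, cpus_per_xpu: float) -> float:
    """CPU units implied by an accelerator count and a CPU:XPU attach ratio."""
    return xpu_units * cpus_per_xpu

def market_size(units: float, asp: float) -> float:
    """Market value as units shipped times average selling price."""
    return units * asp

XPUS_2030 = 40e6  # ~40M accelerators in 2030, used by both estimates

# Bottom-up: the CPU:XPU ratio moves from 1:4 toward 1:2 or 1:1.
for label, ratio in [("1:4", 1 / 4), ("1:2", 1 / 2), ("1:1", 1.0)]:
    print(f"CPU:XPU {label} -> {cpu_units(XPUS_2030, ratio) / 1e6:.0f}M CPUs")

# Average revenue per CPU if the $125B figure were spread over 40M CPUs
# (assumes the figure is for 2030 and the attach ratio reaches 1:1).
implied_asp = 125e9 / cpu_units(XPUS_2030, 1.0)
print(f"Implied average ASP at 1:1: ${implied_asp:,.0f}")

# Top-down low end: 40M XPUs, 1:1 ratio, $3,000 average AI CPU ASP.
top_down_low = market_size(cpu_units(XPUS_2030, 1.0), 3_000)
print(f"Top-down at 1:1 and $3,000: ${top_down_low / 1e9:.0f}B")
```

At a 1:1 ratio the $125 billion figure works out to about $3,125 per CPU, inside the $3,000-$4,000 range quoted for Grace, and 40 million CPUs at $3,000 give exactly the $120 billion low end. The $200 billion upper bound cannot be reproduced from the inputs stated here, so it presumably rests on assumptions the summary does not spell out.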

The analysts divide the future CPU market into three main segments:

- Traditional Server Market: Maintaining steady growth, with an estimated 44 million units shipped by 2030.

- AI Head Nodes: Bundled with GPU racks, mainly responsible for orchestrating tasks and optimizing GPU utilization.

- AI Standalone Racks: Pure-CPU servers specifically handling agentic AI tool invocation and sub-agent concurrency.

As the pie grows, the key question is how it will be divided.

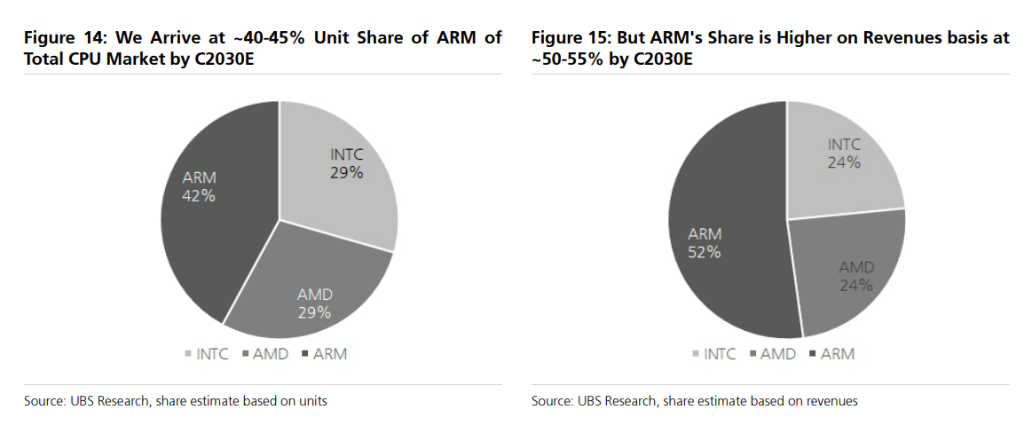

The analysts clearly rank the beneficiaries: ARM is the biggest winner on the server CPU side, followed by AMD, and then Intel — but all three will benefit.

ARM: Market Share Soaring from 15% to 40-45%

ARM-based chips account for about 15% of server CPU unit shipments in 2025. The report forecasts that this share will rise to 40-45% by 2030; measured by revenue, and helped by higher AI CPU ASPs, ARM’s share could reach 50-55%.
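To put the share forecast in dollar terms, the sketch below applies the 50-55% revenue share to the $170 billion 2030 TAM. Treating the two figures as sharing the same basis is an assumption, and the result is the value of ARM-architecture CPUs sold, not ARM Holdings' own revenue, which comes from licensing and royalties.

```python
def share_band(total: float, low: float, high: float) -> tuple[float, float]:
    """Range of a total covered by a (low, high) share band."""
    return total * low, total * high

tam_2030 = 170e9  # UBS's 2030 server CPU TAM estimate

# Unit share: ~15% in 2025 -> 40-45% in 2030.
share_gain = (0.40 / 0.15, 0.45 / 0.15)
print(f"Unit share multiplies by {share_gain[0]:.1f}x-{share_gain[1]:.1f}x")

# Revenue share of 50-55%, applied to the $170B TAM
# (assumption: both figures are on the same basis).
low, high = share_band(tam_2030, 0.50, 0.55)
print(f"ARM-architecture CPU revenue: ${low / 1e9:.1f}B-${high / 1e9:.1f}B")
```

On this reading, ARM's unit share roughly triples, and ARM-based server CPUs would represent about $85-94 billion of 2030 sales.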

Where is ARM’s edge?

The analysts quote experts: ARM architecture offers about 30% higher power efficiency and 20-30% higher memory efficiency, and its smaller core designs give it clear advantages in latency and cost. Crucially, the leading AI and hyperscaler CPUs, including NVIDIA Grace, AWS Graviton 5 (192 cores), and Google’s in-house CPUs, are all ARM-based.

UBS expects that by 2030, ARM will capture more than 75% of the head node CPU market.

However, ARM has its shortcomings. The report notes that ARM server cores have traditionally run a single thread per core, with SMT (simultaneous multithreading) support only now being developed; at high core counts, inter-core interference and software compatibility remain challenges; and the ecosystem still needs to mature, with some software stacks possibly not fully optimized until around 2028.

On this basis, the analysts raised their 12-month price target for ARM from $175 to $245 and maintained a "Buy" rating. As of May 4, the day before the report was published, ARM's share price was $203.26.
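The upside implied by the new target is not stated explicitly; a short calculation on the figures above recovers it. The helper name is illustrative.

```python
def pct_change(old: float, new: float) -> float:
    """Fractional change from old to new (0.25 means +25%)."""
    return new / old - 1

OLD_TARGET, NEW_TARGET = 175.0, 245.0  # UBS 12-month price targets (USD)
PRICE_MAY_4 = 203.26                   # ARM share price on May 4 (USD)

print(f"Target raised by {pct_change(OLD_TARGET, NEW_TARGET):.0%}")
print(f"Implied upside from May 4: {pct_change(PRICE_MAY_4, NEW_TARGET):.1%}")
```

The new target is 40% above the old one and sits roughly 20.5% above the May 4 share price.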

AMD: High Core Count + Multi-threading, the Best AI Partner

AMD's strength lies in high core counts and multi-threading capability, closely matching the agentic AI need for CPUs that are "fast and numerous."

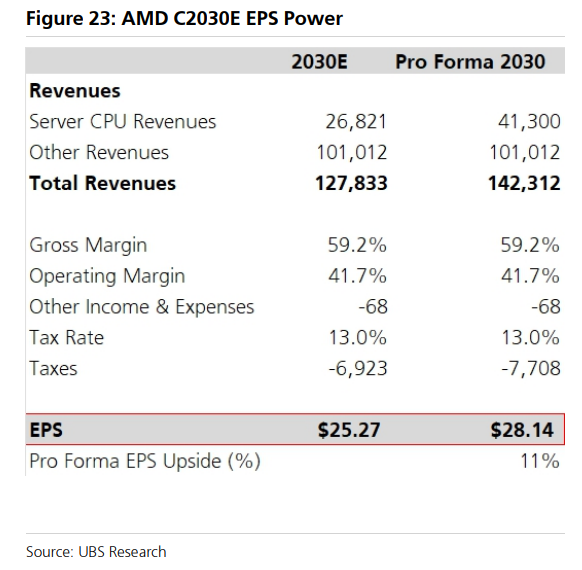

The report cites AMD’s statement from its Analyst Day in November 2025: AMD expects the server CPU market to grow from $26 billion in 2025 to about $60 billion in 2030, with AI-driven CPUs accounting for about 50% of the 2030 market, and it expects its own share of that market to exceed 50%.
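For comparison with UBS's view, the sketch below computes the annual growth rate implied by AMD's own 2025 and 2030 market figures and the dollar size of the AI-driven portion and of a 50% share. Only the dollar figures and percentages come from the report.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate implied by start -> end over `years` years."""
    return (end / start) ** (1 / years) - 1

amd_2025, amd_2030 = 26e9, 60e9  # AMD's server CPU market view (Nov 2025)

rate = cagr(amd_2025, amd_2030, years=5)
ai_portion = 0.50 * amd_2030     # ~50% of the 2030 market is AI-driven
amd_at_half = 0.50 * amd_2030    # AMD's revenue at exactly a 50% share

print(f"AMD's market view implies ~{rate:.1%} growth a year")
print(f"AI-driven portion: ~${ai_portion / 1e9:.0f}B")
print(f"AMD revenue at a 50% share: ${amd_at_half / 1e9:.0f}B (AMD targets more)")
```

AMD's figures imply roughly 18% annual growth, well below the roughly 41% a year implied by UBS's $30 billion to $170 billion path, which shows how much more aggressive the UBS scenario is.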

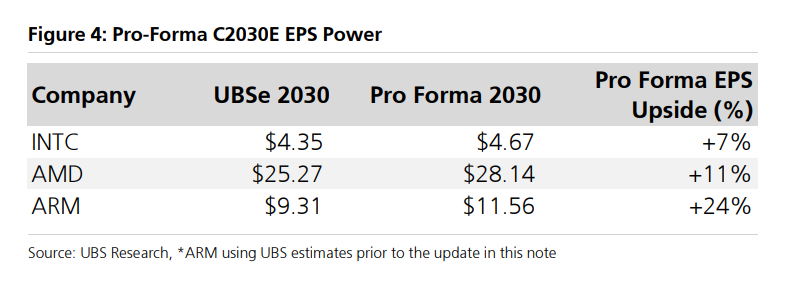

UBS currently forecasts AMD’s 2030 EPS at $25.27. If the market develops as expected, 2030 EPS could be revised up to $28.14, about 11% higher.

Intel: Stable Foundation, But Faces Significant Catch-up Pressure

Intel’s situation is relatively complex.

In the traditional server market, x86 architecture will retain about an 85% share, and Intel still has advantages in tool invocation, storage optimization, and other specific workloads. However, in the AI head node market, Intel’s presence is quickly being squeezed by ARM.

UBS points out that Intel is relying on its "Coral Rapids" product line to narrow the gap with AMD and ARM, but for now AMD and ARM are better positioned in the AI CPU market.

Still, Intel holds one distinctive card: spillover from the PC side. As agentic AI offloads more tasks to local devices (Anthropic’s Claude Code already works this way), the shift could catalyze a PC upgrade cycle that benefits Intel.

The analysts put the potential upward revision to Intel's 2030 EPS at about 7%, the smallest of the three companies.

Not All CPUs Are Equal: The Trade-off Between Latency and Throughput

The report also delves deeply into an often-overlooked detail: agentic AI’s demands for CPUs are not simply a case of "more cores equals better."

Hyperscalers face a fundamental trade-off in hardware selection:

- High-core-count CPUs: High overall throughput and strong energy efficiency, but lower clock speeds, higher latency, and limited software scalability (most software cannot efficiently use hundreds of cores).

- Low-core-count, high-frequency CPUs: Low latency and fast response, suited to “head node” roles (orchestrating tasks and optimizing GPU utilization).

In practice, hyperscalers prefer a layered architecture of "head nodes + large-scale compute nodes": the former handles low-latency orchestration, the latter is responsible for high-throughput parallel execution.

This means that providers offering a broad SKU range (covering various core counts, frequencies, and power profiles) have a competitive edge over those betting on a single “strongest” configuration.

UBS also highlights that the core purchasing metric for hyperscalers isn’t peak performance, but rather the number of transactions per watt, with memory configuration as the key design variable.
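To make the trade-off concrete, the sketch below models a hypothetical SKU catalog and picks one part per role: the lowest-latency SKU for head nodes and the SKU with the most transactions per watt for throughput nodes. Every SKU name and number is invented for illustration and none comes from the report; memory configuration, which the report calls the key design variable, is left out to keep the example short.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class CpuSku:
    name: str
    cores: int
    latency_ms: float          # typical per-request latency (hypothetical)
    transactions_per_s: float  # sustained throughput (hypothetical)
    watts: float               # power draw under load (hypothetical)

    @property
    def transactions_per_watt(self) -> float:
        return self.transactions_per_s / self.watts

def pick_head_node(skus: list[CpuSku]) -> CpuSku:
    """Head nodes orchestrate GPUs, so favor the lowest latency."""
    return min(skus, key=lambda s: s.latency_ms)

def pick_compute_node(skus: list[CpuSku]) -> CpuSku:
    """Throughput nodes are chosen on transactions per watt, not peak speed."""
    return max(skus, key=lambda s: s.transactions_per_watt)

# Hypothetical SKUs for illustration only; not data from the report.
catalog = [
    CpuSku("low-core-high-freq", 32, latency_ms=2.0, transactions_per_s=40_000, watts=250),
    CpuSku("mid-range", 96, latency_ms=3.5, transactions_per_s=90_000, watts=350),
    CpuSku("high-core-count", 192, latency_ms=5.0, transactions_per_s=160_000, watts=450),
]

head = pick_head_node(catalog)
compute = pick_compute_node(catalog)
print(f"Head node: {head.name}; compute node: {compute.name} "
      f"({compute.transactions_per_watt:.0f} transactions/W)")
```

With these made-up numbers the two roles land on different SKUs, so a vendor offering a single configuration would win at most one of them, which is one way to read the report's point about broad SKU ranges.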

Cloud or Edge? An Unresolved Variable

Analysts also highlight a variable worth noting: the division of labor between cloud and edge computing.

Early agent deployments relied almost entirely on the cloud, but more system designs are beginning to shift computation to local devices, where 5 to 10 parallel tasks can run directly against local files and data, reducing latency and cutting cloud compute costs.

UBS, citing expert estimates, notes that broader local execution could cut the CPU capacity required for cloud-hosted agent workloads by about 25%.

This means that the multiplier effect of agentic AI on data center CPUs might ultimately be compressed from 5-8x down to about 4x. At the same time, demand for CPUs in the PC space will rise, benefiting both AMD and Intel.

